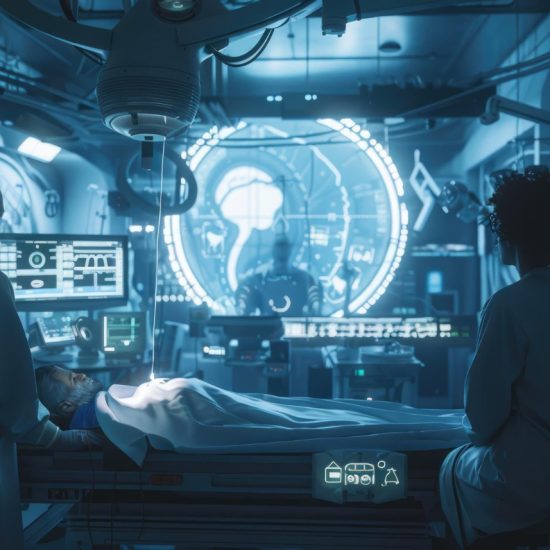

AI now supports clinical decisions in hospitals worldwide. Far from being a future aspiration, it actively assists radiologists, pathologists, and other clinicians in diagnosing conditions faster and more accurately.

Everyone depends on healthcare at some stage of life — from routine check-ups to emergency interventions. Today, AI tools analyse medical images, read scans, and support diagnostic decisions as part of everyday clinical workflows. This shift brings not only remarkable opportunities but also serious responsibilities for organisations, clinicians, and regulators alike.

How AI Transforms Diagnostic Practice

Radiology and Pathology Lead the Way

Radiology and pathology are ideal fields for AI adoption. Both disciplines rely heavily on pattern recognition and image interpretation — two areas where AI excels. AI systems trained on millions of scans can detect tuberculosis, stroke, lung nodules, and breast cancer at a level comparable to specialist clinicians. Similarly, in pathology, AI tools analyse digitised tissue slides, identify cancer cells, quantify biomarkers, and prioritise urgent cases.

Importantly, these tools do not replace clinicians. Instead, they function as decision-support systems. AI models analyse images immediately after capture and flag potential abnormalities. A radiologist or pathologist then reviews the findings and issues the final diagnostic report. This human-AI collaboration reduces reporting times and eases administrative burden, freeing clinicians to focus on complex judgments and patient care.
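To make the decision-support pattern concrete, here is a minimal Python sketch of a reading worklist. It assumes a hypothetical model that returns a suspicion score between 0 and 1 for each study. The names `Study`, `FLAG_THRESHOLD`, `build_worklist`, and `sign_off` are illustrative and do not come from any real product. The point it shows is that the AI only reorders and flags, while the final report is always set by a named clinician.

```python
from dataclasses import dataclass
from typing import List, Optional


@dataclass
class Study:
    study_id: str
    ai_score: float  # hypothetical model output in [0, 1]; higher means more suspicious
    final_report: Optional[str] = None  # only ever set by a clinician via sign_off()


# Illustrative cut-off; a real deployment would calibrate this on local data.
FLAG_THRESHOLD = 0.5


def is_flagged(study: Study) -> bool:
    """Return True when the AI score meets the flagging threshold."""
    return study.ai_score >= FLAG_THRESHOLD


def build_worklist(studies: List[Study]) -> List[Study]:
    """Order studies so the most suspicious are read first. Nothing is auto-reported."""
    return sorted(studies, key=lambda s: s.ai_score, reverse=True)


def sign_off(study: Study, clinician: str, report: str) -> Study:
    """Record the clinician's final report; the AI never writes this field."""
    study.final_report = f"{report} (signed: {clinician})"
    return study


if __name__ == "__main__":
    worklist = build_worklist(
        [Study("A", 0.10), Study("B", 0.90), Study("C", 0.60)]
    )
    for s in worklist:
        print(s.study_id, "FLAGGED" if is_flagged(s) else "routine")
```

In this sketch every study still reaches a human reader. The score changes the reading order, not whether a clinician reviews the case.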

The measurable impact is significant. AI-assisted workflows have cut stroke treatment delays from hours to minutes. Furthermore, tuberculosis detection has accelerated in low-resource settings, including high-density prison populations. Early cancer diagnosis has also improved considerably. In health systems facing workforce shortages and rising demand, these gains are not merely convenient — they are necessary.

Organisational Change Beyond Efficiency

Technical capability alone does not guarantee AI’s success. Introducing AI into a hospital requires redesigning workflows, redefining professional roles, and building trust among clinical staff.

Effective organisations combine human strengths with technological capabilities. AI excels at processing vast volumes of data quickly and consistently. Clinicians, however, contribute contextual understanding, ethical judgment, and accountability. When AI acts as an assistive tool, it augments diagnostic accuracy and reduces repetitive tasks. When organisations integrate it poorly, friction, mistrust, and overreliance follow.

Clinician acceptance is therefore critical. Concerns about job displacement, de-skilling, and opaque decision-making remain significant barriers. Even highly accurate tools face underuse when they are difficult to interpret or lack endorsement from senior clinicians. Moreover, leaders trained in AI literacy and committed to transparent communication play an essential role in bridging adoption gaps.

Where AI Falls Short

Real-world deployment exposes important limitations that controlled testing does not always reveal.

One key risk is automation bias, the tendency to over-trust algorithmic outputs. Incorrect AI suggestions can increase diagnostic errors, even among experienced clinicians, particularly when those errors are not clearly signposted. Additionally, pathology AI systems can misinterpret tissue contamination that human experts would easily disregard.

Bias in training data compounds these risks further. Many models are trained on historical medical data. When those datasets reflect long-standing inequalities, such as the underrepresentation of women, ethnic minorities, and younger patients, the models reproduce those disparities at scale. As a result, underserved populations face higher risks of underdiagnosis or delayed treatment, amplifying existing health inequities.

Ethical and Legal Accountability

The Black Box Problem

AI’s integration into diagnostics complicates traditional accountability frameworks. Clinicians bear moral and legal responsibility for patient outcomes. Yet when AI contributes to clinical decisions, accountability spreads across clinicians, developers, institutions, and regulators.

Opacity in AI systems — the “black box” problem — further weakens accountability. If clinicians cannot understand how an algorithm reached a conclusion, they struggle to critically evaluate its output. This concern has shaped regulatory approaches globally.

Regulatory strategies vary considerably across regions. The US FDA has historically approved only “locked” AI models that do not change after deployment, prioritising predictability over adaptability. While this supports safety, it limits learning. Locked systems cannot adapt to new data or correct for emerging biases. By contrast, the European Union’s AI Act introduces risk-based obligations for healthcare AI. The United Kingdom adopts a more flexible, innovation-friendly approach through regulatory sandboxes, allowing firms to test products that challenge existing legal frameworks. Nevertheless, legal systems continue to lag behind technological progress.

The Path Forward

Avoiding AI because of fear of error risks forfeiting substantial patient benefits. Instead, the focus must shift to responsible implementation.

Responsible implementation requires rigorous validation across diverse populations, continuous monitoring after deployment, and clear accountability frameworks. It also demands transparency with both clinicians and patients, along with sustained human oversight.
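One simple form that validation across diverse populations can take is comparing sensitivity (the share of true cases the model flags) between patient subgroups. The Python sketch below is illustrative only. It assumes each validation record is a `(group, has_disease, model_flagged)` tuple, and the function names `sensitivity_by_group` and `largest_gap` are hypothetical. A real audit would also examine specificity, confidence intervals, and sample sizes.

```python
from collections import defaultdict
from typing import Dict, Iterable, Tuple

Record = Tuple[str, bool, bool]  # (group, has_disease, model_flagged)


def sensitivity_by_group(records: Iterable[Record]) -> Dict[str, float]:
    """Compute per-group sensitivity = true positives / all diseased cases."""
    true_pos = defaultdict(int)
    false_neg = defaultdict(int)
    for group, has_disease, flagged in records:
        if not has_disease:
            continue  # sensitivity only considers patients who have the condition
        if flagged:
            true_pos[group] += 1
        else:
            false_neg[group] += 1
    groups = set(true_pos) | set(false_neg)
    return {g: true_pos[g] / (true_pos[g] + false_neg[g]) for g in groups}


def largest_gap(sensitivities: Dict[str, float]) -> float:
    """Difference between the best- and worst-served groups."""
    return max(sensitivities.values()) - min(sensitivities.values())


if __name__ == "__main__":
    # Made-up toy data, for illustration only.
    data = [
        ("group_a", True, True), ("group_a", True, True),
        ("group_a", True, True), ("group_a", True, False),
        ("group_b", True, True), ("group_b", True, True),
        ("group_b", True, False), ("group_b", True, False),
        ("group_b", False, False),
    ]
    sens = sensitivity_by_group(data)
    print(sens, largest_gap(sens))
```

Running the same check on post-deployment data at regular intervals is one way to put continuous monitoring into practice: a widening gap signals that one group is being underdiagnosed relative to another.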

Ultimately, AI should serve as an intelligent partner — not a replacement — for clinical expertise. When organisations embed it thoughtfully and guide it by ethical principles, AI can reduce diagnostic error and extend specialist care to underserved populations. Furthermore, adaptive regulation can help build more sustainable health systems over time.

The challenge for tomorrow’s healthcare leaders is clear: design systems where humans and machines work together — safely, ethically, and effectively — always in the service of patient care.
