The Growing Mental Health Crisis

Mental health care is facing a serious global shortage of providers. According to the World Health Organization, nearly one in eight people worldwide lives with a mental health condition, yet there are far too few trained professionals to meet this demand. In the United States alone, over 160 million people live in areas with a shortage of mental health providers, and roughly half of all adults experiencing mental illness receive no treatment at all.

This gap is not just a logistical problem; it is a human crisis. In response, researchers, clinicians, and technologists are turning to artificial intelligence to help close it, quietly but with growing urgency.

How AI Is Stepping In

Artificial intelligence is entering mental health care from several angles. First, it is improving early detection. Second, it is automating routine tasks. Third, it is enabling round-the-clock patient support. Together, these capabilities make AI one of the most promising allies mental health professionals have seen in decades.

Unlike traditional rule-based tools, AI models learn from data. They can spot patterns in speech, behaviour, and physiological signals that even experienced clinicians might miss. Consequently, they offer the possibility of identifying mental health conditions weeks or months before symptoms become clinically obvious.

Key AI Tools Transforming Therapy

Early Detection and Diagnosis

Machine learning algorithms now analyse electronic health records, social media activity, and wearable device data to flag early warning signs. Apps that track typing speed, phone call frequency, and sleep patterns can detect the onset of depression or anxiety well before a patient seeks help.
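To make the idea of passive monitoring concrete, here is a minimal sketch of one simple way such a signal could be flagged: comparing a person's recent readings against their own baseline. It is an illustration only, not how any particular app works. The function name `flag_deviation`, the threshold of 2.0 standard deviations, and the sample sleep figures are all assumptions made for this example.

```python
from statistics import mean, stdev


def flag_deviation(history, recent, threshold=2.0):
    """Return True if the average of `recent` differs from the personal
    baseline in `history` by more than `threshold` baseline standard
    deviations. Hypothetical helper, not taken from any real product."""
    if len(history) < 2 or not recent:
        raise ValueError("need at least two baseline values and one recent value")
    baseline_mean = mean(history)
    baseline_sd = stdev(history)
    if baseline_sd == 0:
        # A perfectly flat baseline: any change at all counts as a deviation.
        return mean(recent) != baseline_mean
    z_score = (mean(recent) - baseline_mean) / baseline_sd
    return abs(z_score) > threshold


# Made-up nightly sleep durations in hours (assumed data for illustration).
baseline_sleep = [7.2, 6.9, 7.5, 7.1, 7.0, 7.3, 6.8, 7.4]
recent_sleep = [5.1, 4.8, 5.5]

if flag_deviation(baseline_sleep, recent_sleep):
    print("Sleep has shifted well outside this person's usual range.")
```

A flag like this is only a prompt for a closer look. Real systems combine many signals and still need clinical judgment to interpret them.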

AI diagnostic tools also show promising, if uneven, accuracy. Studies report that AI systems identify mental disorders with accuracy ranging from 68% to 100%, a wide spread that reflects differences between studies. Traditional diagnosis, by contrast, often struggles: depression is diagnosed correctly only around 50% of the time in psychiatric settings.

AI Chatbots and Virtual Support

AI-powered chatbots like Woebot deliver real-time coping strategies, therapeutic exercises, and emotional support — any time of day. A recent clinical trial of Therabot, a fully generative AI chatbot developed at Dartmouth, showed significant symptom improvements in patients with major depression, anxiety, and eating disorders.

These tools do not replace therapists. Instead, they function as always-available companions. They help patients practise therapy skills between sessions, complete homework assignments, and track mood shifts over time. Therapists, therefore, gain richer insight into a patient’s daily life before each consultation.

Reducing the Administrative Burden

Beyond clinical tasks, AI also tackles paperwork. It automates scheduling, note-taking, billing, and documentation. This frees therapists from hours of administrative work every week. As a result, professionals spend more time with patients and less time behind a screen. That shift alone can meaningfully reduce burnout — a serious problem in this field.

The Human-AI Partnership Model

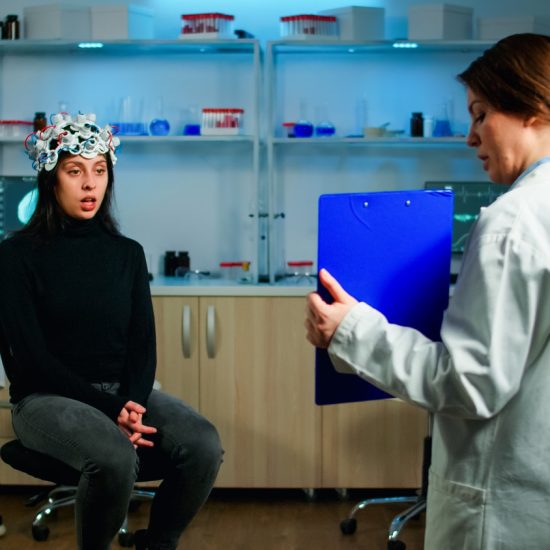

Most experts agree: the future of mental health care is not AI replacing therapists. Rather, it is a hybrid model where both work together. AI handles data analysis, pattern recognition, and routine support. Meanwhile, human clinicians bring empathy, nuance, and clinical judgment to every session.

Researchers at Kaiser Permanente and leading universities advocate for this blended approach. They stress that clinicians must remain central to care decisions. AI assists and informs — it does not override. This collaboration ultimately produces better, faster, and more personalised outcomes for patients.

Challenges and Ethical Concerns

Despite its promise, AI in mental health raises legitimate concerns. Data privacy stands out as the most pressing issue. Mental health data is deeply sensitive. Moreover, many AI health apps fall outside standard privacy protections like HIPAA, leaving patients potentially vulnerable.

Bias is another major concern. AI models are trained on existing datasets. If those datasets under-represent or exclude certain populations, the resulting tools may deliver inaccurate or inequitable care. Developers must therefore involve diverse communities and clinicians in building these systems, and audit them regularly.
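One basic form an audit could take is comparing how often a tool is right for each population group. The sketch below assumes a list of (group, predicted, actual) records. The function names `accuracy_by_group` and `accuracy_gap` and the sample records are invented for illustration, and a real audit would look at far more than raw accuracy.

```python
from collections import defaultdict


def accuracy_by_group(records):
    """Compute the share of correct predictions for each group.
    `records` is an iterable of (group, predicted, actual) tuples."""
    correct = defaultdict(int)
    total = defaultdict(int)
    for group, predicted, actual in records:
        total[group] += 1
        if predicted == actual:
            correct[group] += 1
    return {group: correct[group] / total[group] for group in total}


def accuracy_gap(per_group):
    """Difference between the best-served and worst-served group."""
    return max(per_group.values()) - min(per_group.values())


# Made-up screening results (assumed data for illustration).
sample_records = [
    ("group_a", "depressed", "depressed"),
    ("group_a", "not_depressed", "not_depressed"),
    ("group_a", "depressed", "depressed"),
    ("group_a", "not_depressed", "depressed"),
    ("group_b", "not_depressed", "depressed"),
    ("group_b", "depressed", "depressed"),
    ("group_b", "not_depressed", "depressed"),
    ("group_b", "not_depressed", "not_depressed"),
]

per_group = accuracy_by_group(sample_records)
print(per_group)
print(accuracy_gap(per_group))
```

A large gap between groups does not explain why the tool performs unevenly, but it signals where developers and clinicians should look first.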

Transparency also matters. Clinicians rely on clear reasoning when making decisions about patient care. Many AI tools, however, operate as “black boxes,” offering predictions without explanations. Until interpretability improves, clinician trust in AI-driven insights will remain limited.

The Road Ahead

AI in mental health is no longer a distant possibility. It is already here, working quietly in therapy apps, diagnostic platforms, and clinical decision tools. As technology advances, its role will only grow. Wearables will monitor mood in real time. Large language models will synthesise complex patient data instantly. Personalised care pathways will become the norm rather than the exception.

However, success depends on responsible development. Involving mental health professionals in building these tools is essential. Equally important are protecting patient privacy, addressing bias, and keeping human compassion at the centre of every interaction. AI is a powerful lifeline, but only when held in the right hands.